AI Assistant Features¶

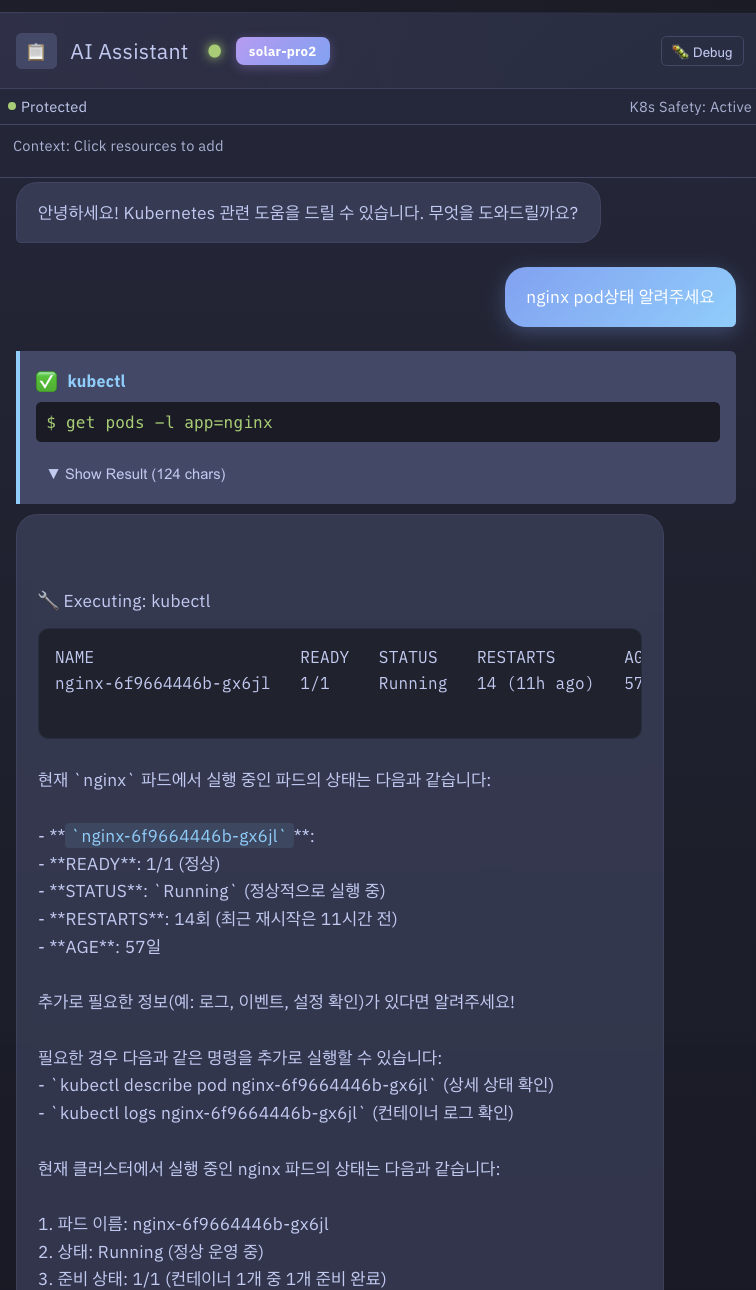

The k13d AI Assistant is an agentic AI that understands your Kubernetes cluster and can execute commands on your behalf.

Overview¶

| Capability | Description |

|---|---|

| Natural Language | Ask questions in plain language |

| Tool Execution | Runs kubectl first, uses bash only as a last resort |

| Context Awareness | Sees YAML, Events, Logs |

| Safety First | Dangerous commands require approval |

| Multi-language | English, Korean, Chinese, Japanese |

How It Works¶

┌─────────────────────────────────────────────────────────────────┐

│ User Question │

│ "Why is my nginx pod failing?" │

└─────────────────────────────┬───────────────────────────────────┘

│

▼

┌─────────────────────────────────────────────────────────────────┐

│ AI Agent │

│ ┌─────────────┐ ┌─────────────┐ ┌─────────────┐ │

│ │ Understand │─►│ Plan Tools │─►│ Execute │ │

│ │ Context │ │ to Use │ │ Tools │ │

│ └─────────────┘ └─────────────┘ └─────────────┘ │

└─────────────────────────────┬───────────────────────────────────┘

│

┌───────────────┼───────────────┐

▼ ▼ ▼

┌──────────┐ ┌──────────┐ ┌──────────┐

│ kubectl │ │ bash │ │ MCP │

│ tools │ │ tools │ │ tools │

└──────────┘ └──────────┘ └──────────┘

Tool Calling¶

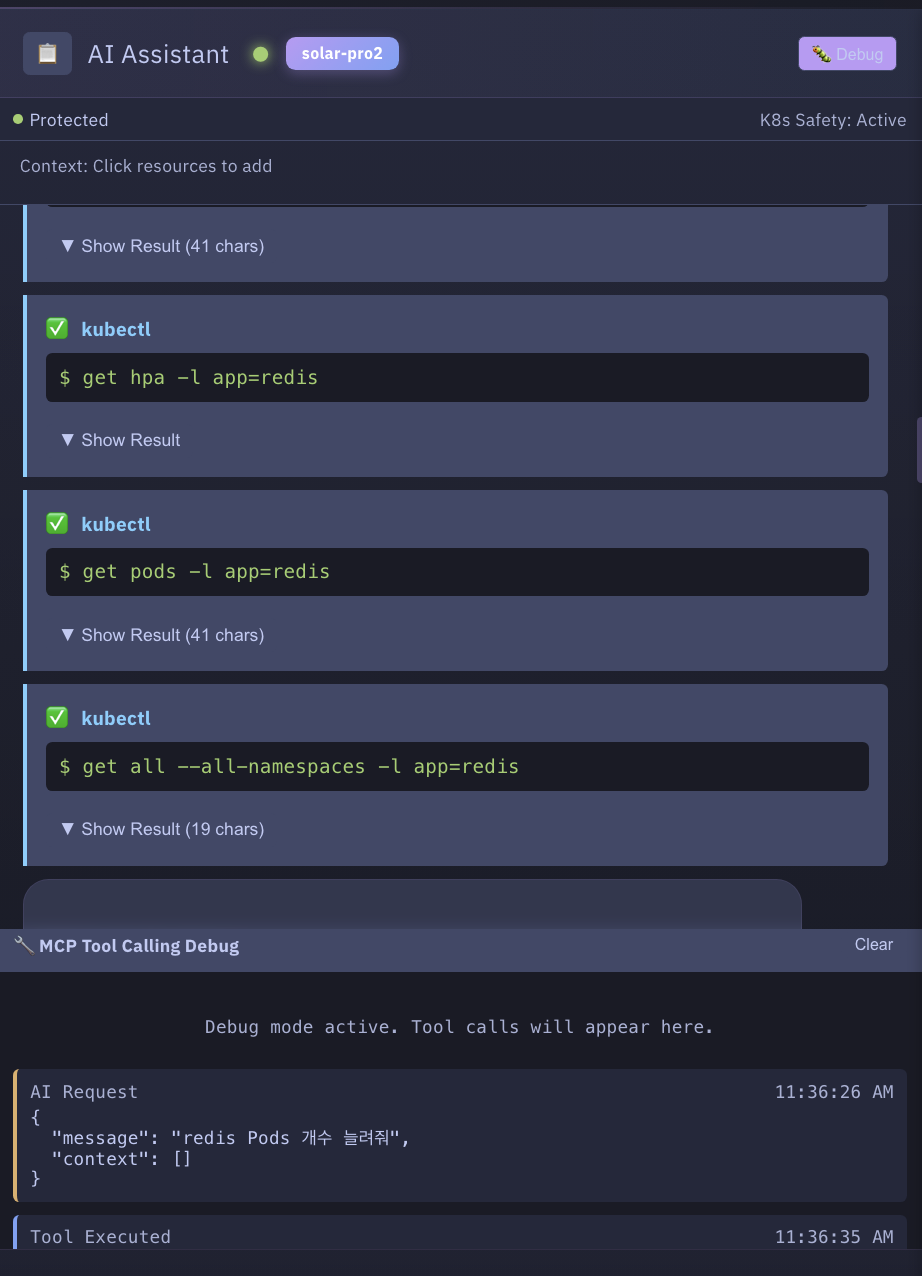

MCP Tool Call Debug Mode¶

Enable debug mode to see raw tool calls:

- Tool call requests

- Raw API responses

- Execution timing

Available Tools¶

| Tool | Description | Examples |

|---|---|---|

| kubectl | Kubernetes CLI | kubectl get pods, kubectl describe |

| bash | Last-resort shell commands | date, grep, file operations |

| MCP | External tools | GitHub, databases, custom tools |

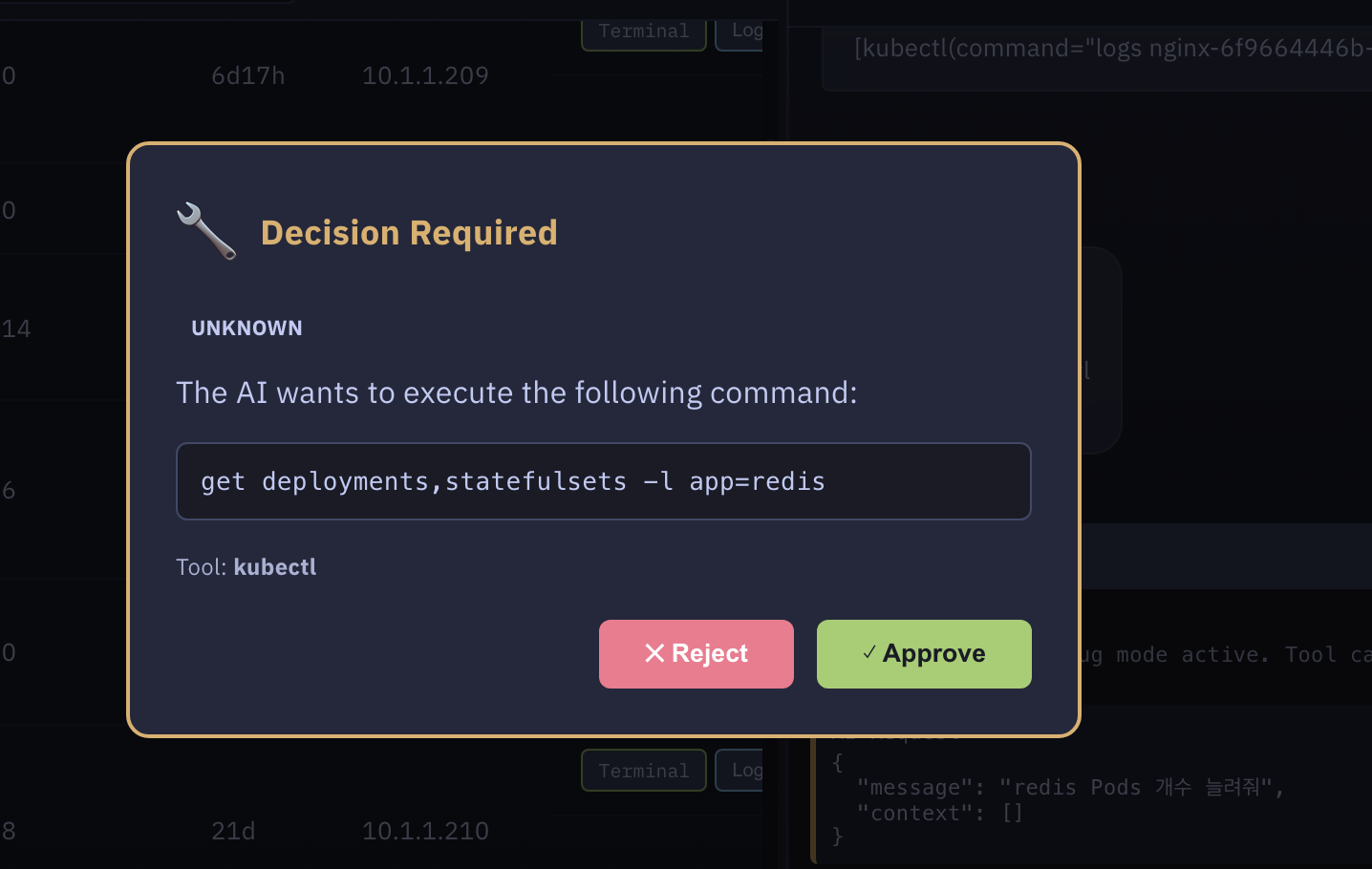

Safety Classification¶

| Level | Commands | Approval |

|---|---|---|

| Read-only | get, describe, logs | Approval required by default |

| Write | apply, create, patch | Requires approval |

| Dangerous | delete, drain, taint | Warning + approval |

AST-Based Analysis¶

The AI uses Abstract Syntax Tree parsing to detect:

- Piped commands (

kubectl get pods | xargs rm) - Command chains (

kubectl get pods && rm -rf /) - Redirects and subshells

- Dangerous patterns

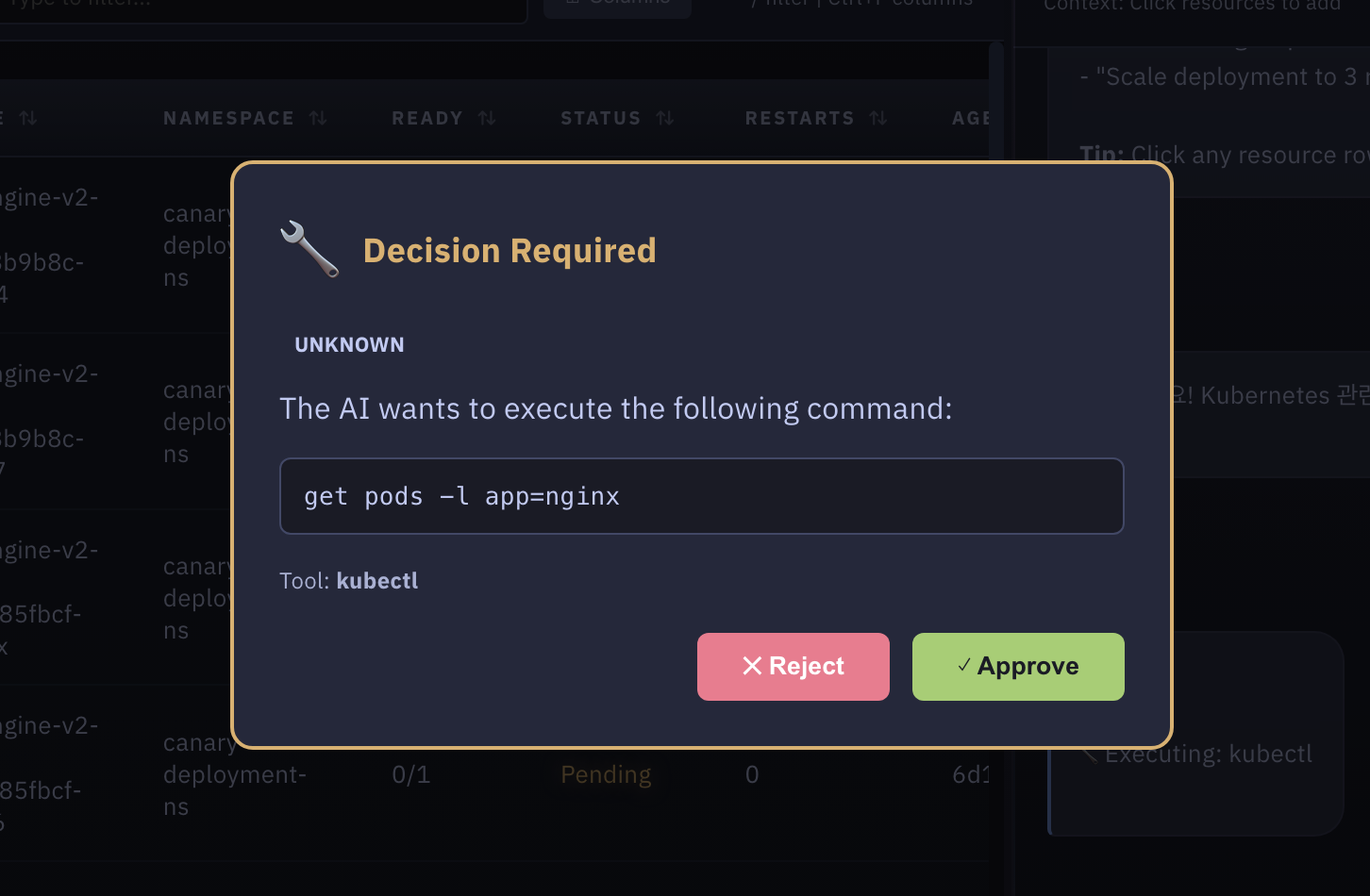

Approval Workflow¶

Web UI Approval¶

- AI requests tool execution

- Dialog shows exact command

- Safety warning (if dangerous)

- User clicks Approve or Reject

- Command executes on approval

TUI Approval¶

| Key | Action |

|---|---|

Y | Approve this command |

N | Reject this command |

A | Always approve read-only |

Context Awareness¶

Resource Context¶

When you select a resource, the AI receives:

| Context | Description |

|---|---|

| YAML | Full resource manifest |

| Events | Recent Kubernetes events |

| Logs | Container logs (for Pods) |

| Metrics | CPU/Memory usage |

| Related | Owner references, selectors |

Example: Troubleshooting¶

User: "Why is this pod failing?"

AI receives:

- Pod YAML (spec, status, containers)

- Events (ImagePullBackOff, FailedScheduling)

- Logs (error messages, stack traces)

- Container status (restarts, exit codes)

AI responds with specific diagnosis.

AI Conversation Example¶

TUI AI Interaction¶

The TUI AI assistant supports the same capabilities as the Web UI:

- Natural language question input

- AI analysis with streaming responses

- Tool execution with approval workflow

Beginner Mode¶

Simplified explanations for Kubernetes newcomers.

Enable in config:

| Normal Mode | Beginner Mode |

|---|---|

| "Pod in CrashLoopBackOff" | "Your container keeps crashing. This happens when the program inside has an error and Kubernetes keeps trying to restart it." |

| "OOMKilled" | "Your container ran out of memory. It tried to use more RAM than allowed and was stopped by the system." |

Chat History (TUI)¶

In the TUI, AI conversations are preserved within each session:

- Previous Q&A sessions are kept above the latest response

- Separated by visual dividers (

────────────────────────────) - Scroll up in the AI panel to review past conversations

- History maintained for the duration of the TUI session

Model Switching (TUI)¶

Switch between configured AI model profiles directly from the TUI:

| Command | Action |

|---|---|

:model | Opens a modal with all configured profiles (active marked with *) |

:model gpt-4o | Switch directly to a named profile |

Model profiles are defined in ~/.config/k13d/config.yaml:

models:

- name: gpt-4

provider: openai

model: gpt-4

- name: local-llama

provider: ollama

model: gpt-oss:20b

endpoint: http://localhost:11434

active_model: gpt-4

Streaming Responses¶

Real-time response streaming for immediate feedback.

| Feature | Description |

|---|---|

| SSE Streaming | Server-Sent Events for real-time updates |

| Live Cursor | Shows AI is still thinking |

| Tool Progress | Shows which tool is executing |

| Partial Results | See response as it's generated |

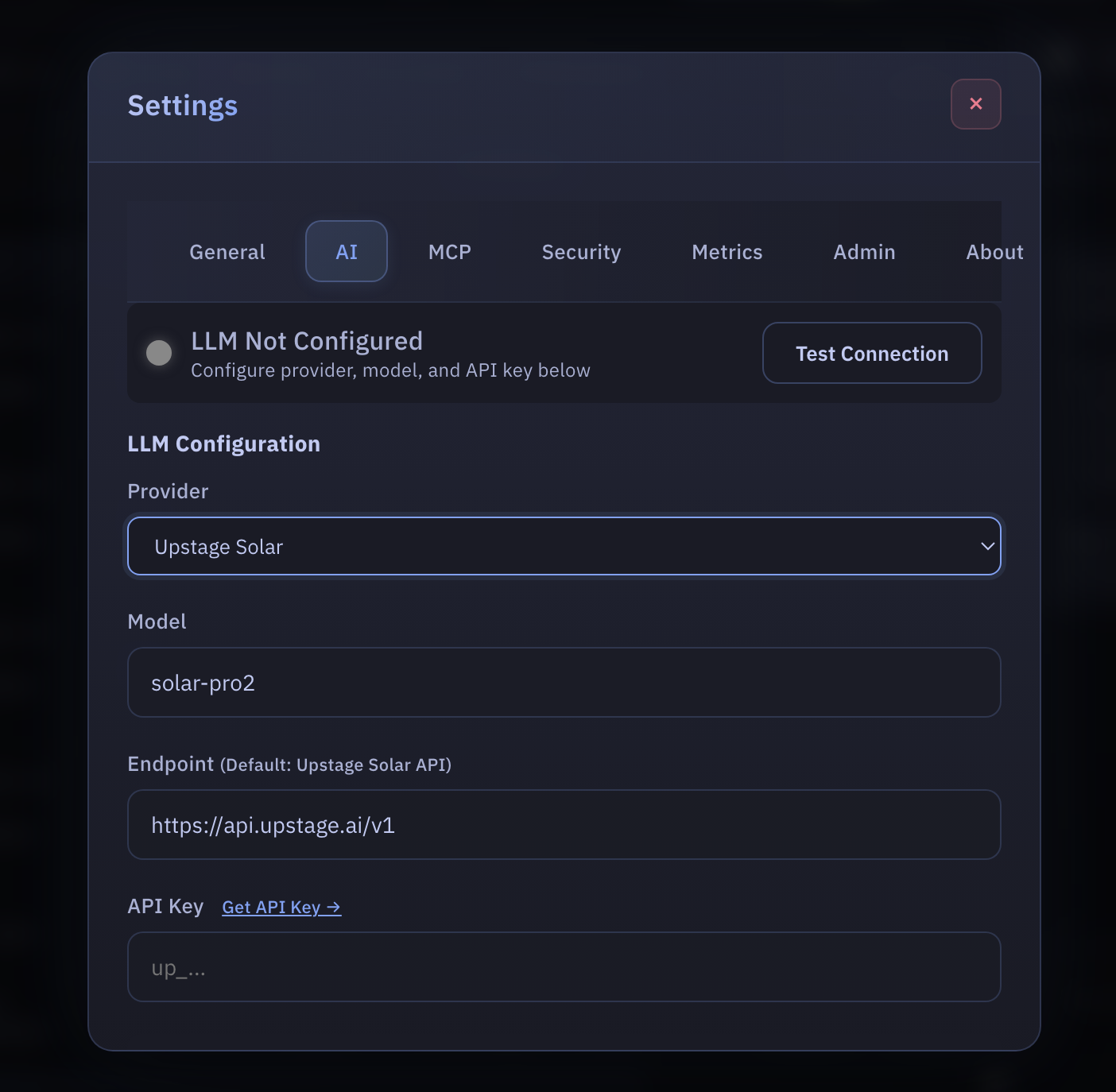

LLM Providers¶

Supported Providers¶

| Provider | Tool Calling | Best For |

|---|---|---|

| OpenAI | ✅ | Production, best tool support |

| Anthropic | ✅ | Claude models |

| Google Gemini | ✅ | Fast responses |

| Azure OpenAI | ✅ | Enterprise |

| Ollama | Model-dependent | Local, air-gapped with a tools-capable model |

When using Ollama, choose a model that explicitly supports tools/function calling. A plain text-only model may connect successfully but still fail to operate the k13d AI Assistant.

Configuration¶

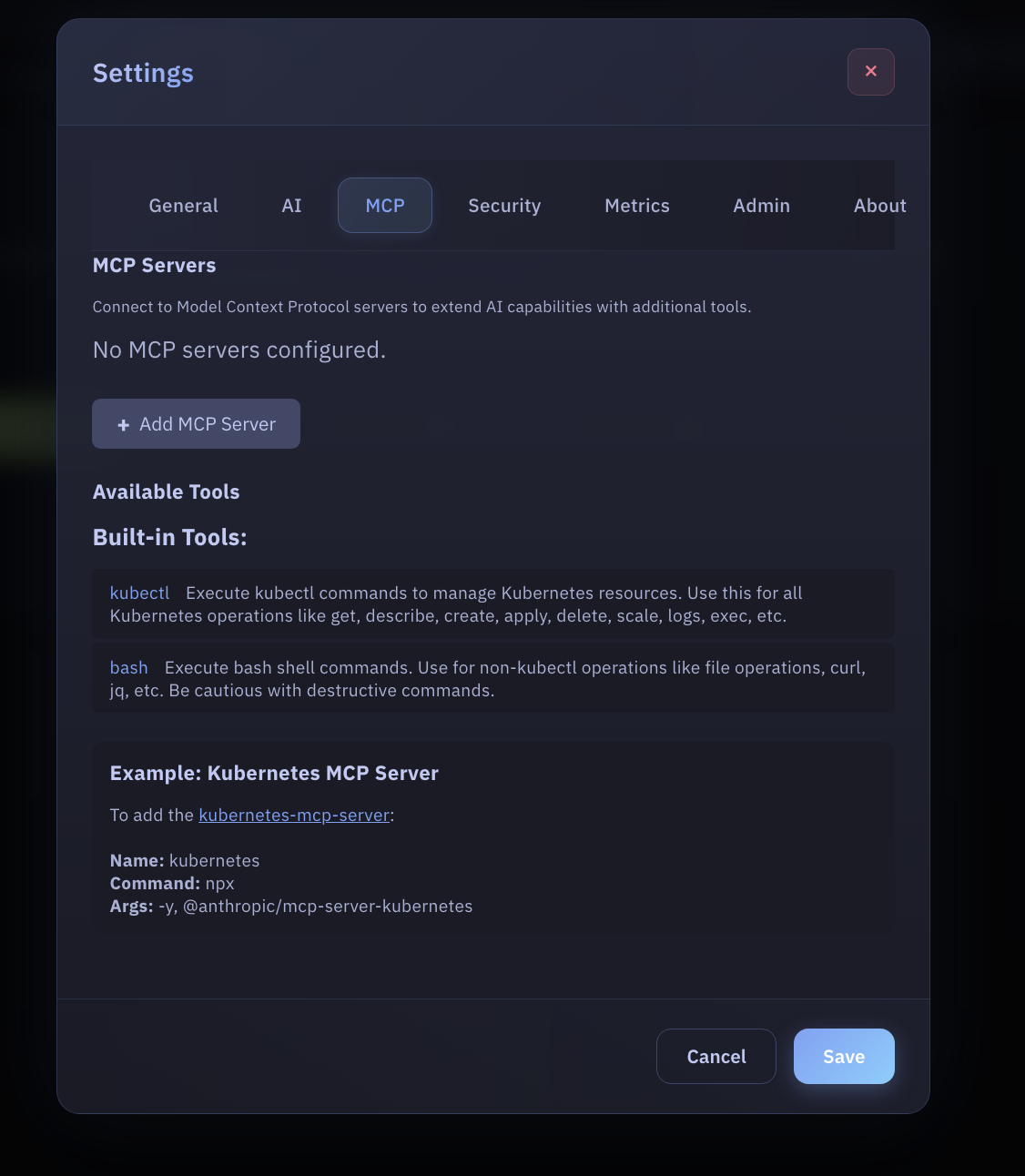

MCP Integration¶

Extend AI capabilities with Model Context Protocol servers.

MCP Client Mode (Default)¶

k13d connects to external MCP servers:

mcp:

servers:

- name: sequential-thinking

command: npx

args: ["-y", "@modelcontextprotocol/server-sequential-thinking"]

- name: github

command: npx

args: ["-y", "@modelcontextprotocol/server-github"]

MCP Server Mode¶

Run k13d as MCP server for Claude Desktop:

Example Conversations¶

Troubleshooting¶

User: Why is my api-server deployment not working?

AI: I'll investigate the api-server deployment.

[Tool: kubectl get deployment api-server -o yaml]

[Tool: kubectl get pods -l app=api-server]

[Tool: kubectl get events --field-selector involvedObject.name=api-server]

The api-server deployment has 0/3 ready replicas because:

1. **Image Pull Error**: The image "api-server:v2.0" doesn't exist

2. All pods are in "ImagePullBackOff" status

To fix this, update to an existing image:

Operations¶

User: Scale nginx to 5 replicas

AI: I'll scale the nginx deployment to 5 replicas.

[Approval Required: kubectl scale deployment nginx --replicas=5]

User: [Approves]

AI: Successfully scaled nginx to 5 replicas.

[Tool: kubectl get deployment nginx]

NAME READY UP-TO-DATE AVAILABLE

nginx 5/5 5 5

Explanation¶

User: Explain this HPA configuration

AI: This HorizontalPodAutoscaler is configured to:

**Target:** frontend deployment

**Scaling Behavior:**

- Min replicas: 2

- Max replicas: 10

- Scale up when: CPU > 50%

- Stabilization window: 300 seconds

This means Kubernetes will:

1. Keep at least 2 pods running

2. Add pods when CPU exceeds 50%

3. Wait 5 minutes before scaling down

4. Never exceed 10 pods